EVERY. TIME. https://twitter.com/NLRG_/status/1338622300858552322

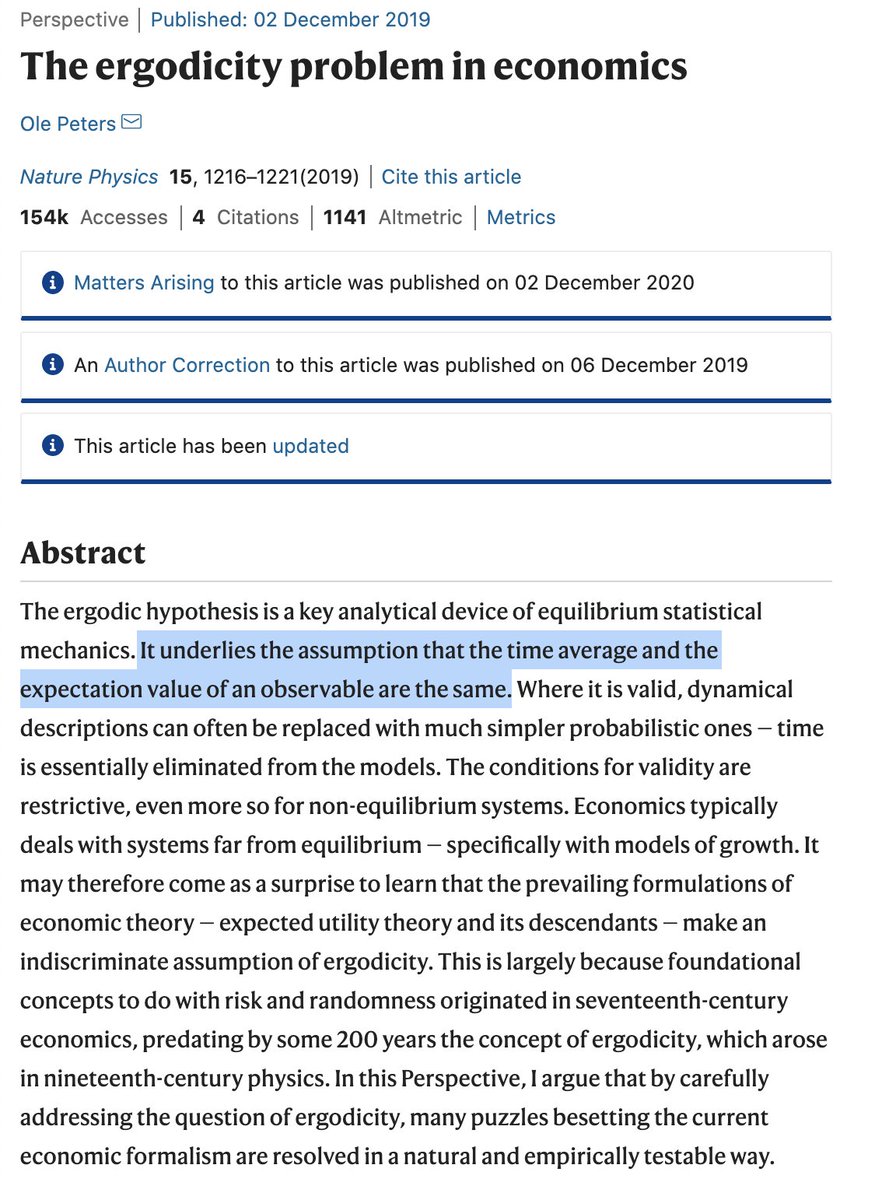

this is a good thread by @ben_golub but I'd also like to make clear that Peters is very much a crank, and is using an extremely deceptive mathematical trick (and strawman) as a part of his analysis

and... I do literally mean a trick. https://twitter.com/ben_golub/status/1338175642932715520

here is the full paper so you can see it for yourself:

https://www.nature.com/articles/s41567-019-0732-0

and the intro... ugh

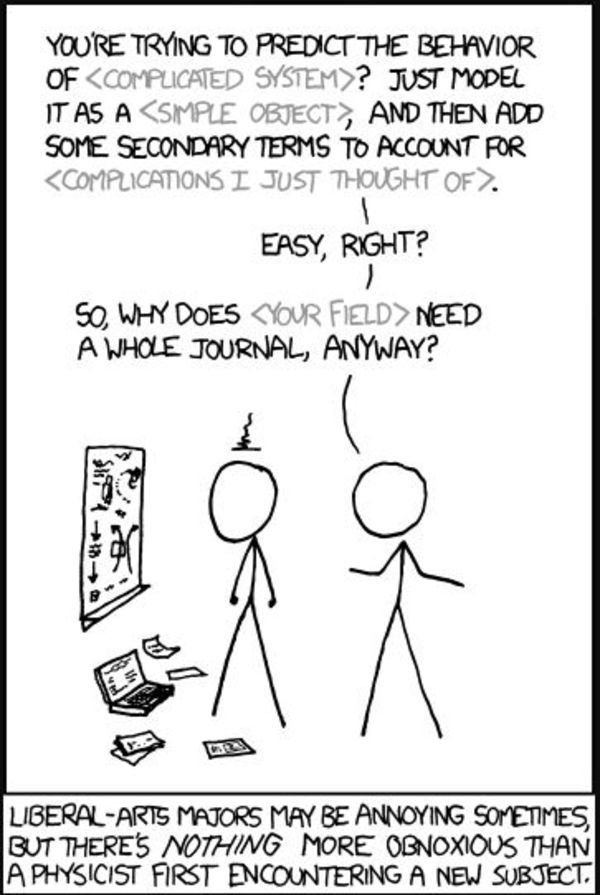

"wait, so I didn't study bounded repeated auctions among different risk accepting individuals for my degree?"

apparently not, Blue. apparently not.

anyways, onto the math

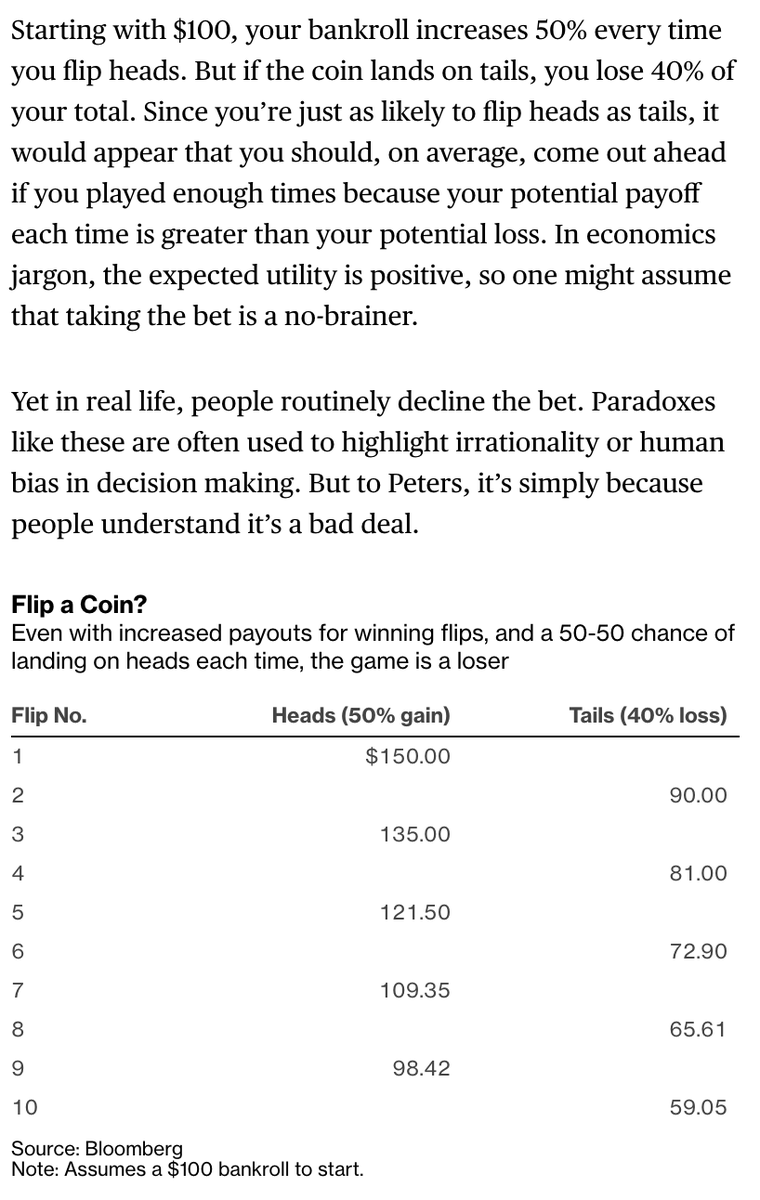

here's the parlor trick. read the problem below

"flip a coin. win the toss, gain 50%. lose the toss, lose 40%"

which do you take?

it relies on people not realizing that to maintain stasis at 100%, your decay rate is bounded by zero while your growth rate is unbounded

most people look at this as "if i choose right, I get $50, and if I choose wrong, I lose $40. Easy choice!"

and in a one shot, that's not wrong.

but apparently because 0.5>0.4

economists are incapable of doing temporal analysis on repeated actions (flipping the coin 10 times)
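for what it's worth, that temporal analysis takes a few lines. here is a minimal sketch in Python, assuming you stake your whole bankroll on every flip and start from $100 (the function name and the $100 starting point are my own illustration, not taken from the paper):

```python
from math import comb

def outcome_distribution(n_flips, start=100.0, win=1.5, lose=0.6):
    """Exact (wealth, probability) pairs after n fair flips, staking everything each time."""
    return [
        # k wins and (n_flips - k) losses, in any order, give the same final wealth
        (start * win**k * lose**(n_flips - k), comb(n_flips, k) / 2**n_flips)
        for k in range(n_flips + 1)
    ]

dist = outcome_distribution(10)
expected = sum(w * p for w, p in dist)           # probability-weighted average
typical = dist[5][0]                             # 5 wins, 5 losses: the most likely outcome
p_ahead = sum(p for w, p in dist if w > 100.0)   # chance of finishing above the start

print(f"expected wealth: {expected:.2f}")
print(f"most likely wealth: {typical:.2f}")
print(f"chance of ending above $100: {p_ahead:.3f}")
```

under those assumptions the average comes out around $162.89, but the single most likely result is about $59.05, and you finish ahead roughly 38% of the time. the average is dragged up by a handful of lucky streaks.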

how do we reconcile this?

well, Peters thinks that we don't; that we sit there blindly and twiddle our thumbs thinking we're smart

but such a pithy model (of his own creation) wouldn't even trick an econ 101 high school student

or... most middle school algebra students

i know this because I literally asked my 13 year old brother to try to figure this out, and he had no problem with it whatsoever

when I asked him "why don't you always, over time, pick the gain of 50% vs loss of 40%" he couldn't put it into words well

I can

100% maintenance of $100 is $100. But to compensate for a halving of something (50%) you have to have a doubling on the other end (200%)

it's a property of multiplication

the inverse of 25% is 400%, and of 10% is 1000%

but the trick of the problem is that people are/

thinking of it like an addition problem, when it's better seen through multiplication

they see "ADD 50%" and "SUBTRACT 40%"

and don't realize that this also means

"MULTIPLY BY 1.5" and "DIVIDE BY 1.67"

these are your actual utility growth factors
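to make the multiplication point concrete, here is a small sketch in Python of the factor you need to undo a given loss. the function name `break_even_factor` is hypothetical, just for illustration; it takes the fraction lost, so "left with 25%" is a loss of 0.75:

```python
def break_even_factor(loss_fraction):
    """Growth factor needed to undo a fractional loss (e.g. 0.4 for a 40% loss)."""
    if not 0 <= loss_fraction < 1:
        raise ValueError("loss_fraction must be in [0, 1)")
    # losing a fraction L multiplies wealth by (1 - L); the inverse is 1 / (1 - L)
    return 1.0 / (1.0 - loss_fraction)

for loss in (0.5, 0.75, 0.9, 0.4):
    print(f"lose {loss:.0%} -> need x{break_even_factor(loss):.2f} to get back to even")
```

the last case is the coin toss: a 40% loss needs roughly x1.67 to recover, and the winning side only pays x1.5.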

simply, Peters has used a leading equation

∆x(win) = +0.5x, p(win) = 0.5

∆x(lose) = -0.4x, p(lose) = 0.5

can also be written as

x'(win) = 1.5x, p(win) = 0.5

x'(lose) = x/1.67, p(lose) = 0.5

this is the problem with creating your own "utility" function and then demanding semantic obedience

I've referred to this several times as a "mathematical trick" because frankly, almost a decade ago now back in high school that's exactly how I learned this. I used it Freshman year in calc as a joke on a kid who very quickly caught on

this is simply semantic deception

using *1.5x and x/1.67 gives you perfectly valid temporal utility growth constants for your variables, but by framing it as addition and subtraction, it's harder to see
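written out as a sketch, with wealth $x_t$ after flip $t$ and $g$ as my own label for the average log growth per flip (neither symbol is quoted from the paper), the two framings give different answers:

```latex
x_{t+1} = 1.5\,x_t \ \text{(win)}, \qquad
x_{t+1} = \frac{x_t}{1.67} \approx 0.6\,x_t \ \text{(lose)}, \qquad
p = 0.5 \ \text{each}

% additive framing: average one-step factor
\mathbb{E}\!\left[\frac{x_{t+1}}{x_t}\right] = 0.5(1.5) + 0.5(0.6) = 1.05

% multiplicative framing: average log growth per flip
g = 0.5\ln 1.5 + 0.5\ln 0.6 = 0.5\ln 0.9 \approx -0.053
```

the one-step average is above 1, but $g$ is negative: a win followed by a loss multiplies your wealth by $1.5 \times 0.6 = 0.9$.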

even as *1.5x and *0.6x some still get confused, but once you show the constant in relation to division/

/for the decay variable (losing the coin toss) pretty much everyone gets the trick immediately.

Meanwhile, I cannot name a single economics textbook that teaches repeated action games that would try to claim /that this

∆x(win) = +0.5x, p(win) = 0.5

∆x(lose) = -0.4x, p(lose) = 0.5

formulation ALWAYS favors taking the bet in repeated action games, and that 0.5 > 0.4 as a static difference is all we need to conclude so

I challenge professor @ole_b_peters to find any college textbook that explains this improperly and screenshot it for all of twitter

frankly, i'm amazed that a professor was able to slip this party trick that I've known about since high school into an academic paper, and think economists are going to take him seriously

i don't know why he thinks game theorists don't do repeated action games/

/or why he thinks economists worship at the feet of ergodicity or why he thinks we don't know how to create utility functions that have useful temporal constants.

frankly, i think he should stick with assuming perfect spheres in vacuums, as that might be more his speed
