New paper out! We developed a new method to study confidence in perceptual decision-making without requiring explicit rating scales, and found clear deviations from Bayesian accounts of subjective confidence. https://www.nature.com/articles/s41562-020-00953-1

Why not use scales? In everyday life, confidence is often used to guide behaviour when feedback is sparse or unavailable, rather than reported on a scale. These situations are not well captured by experiments with explicit ratings.

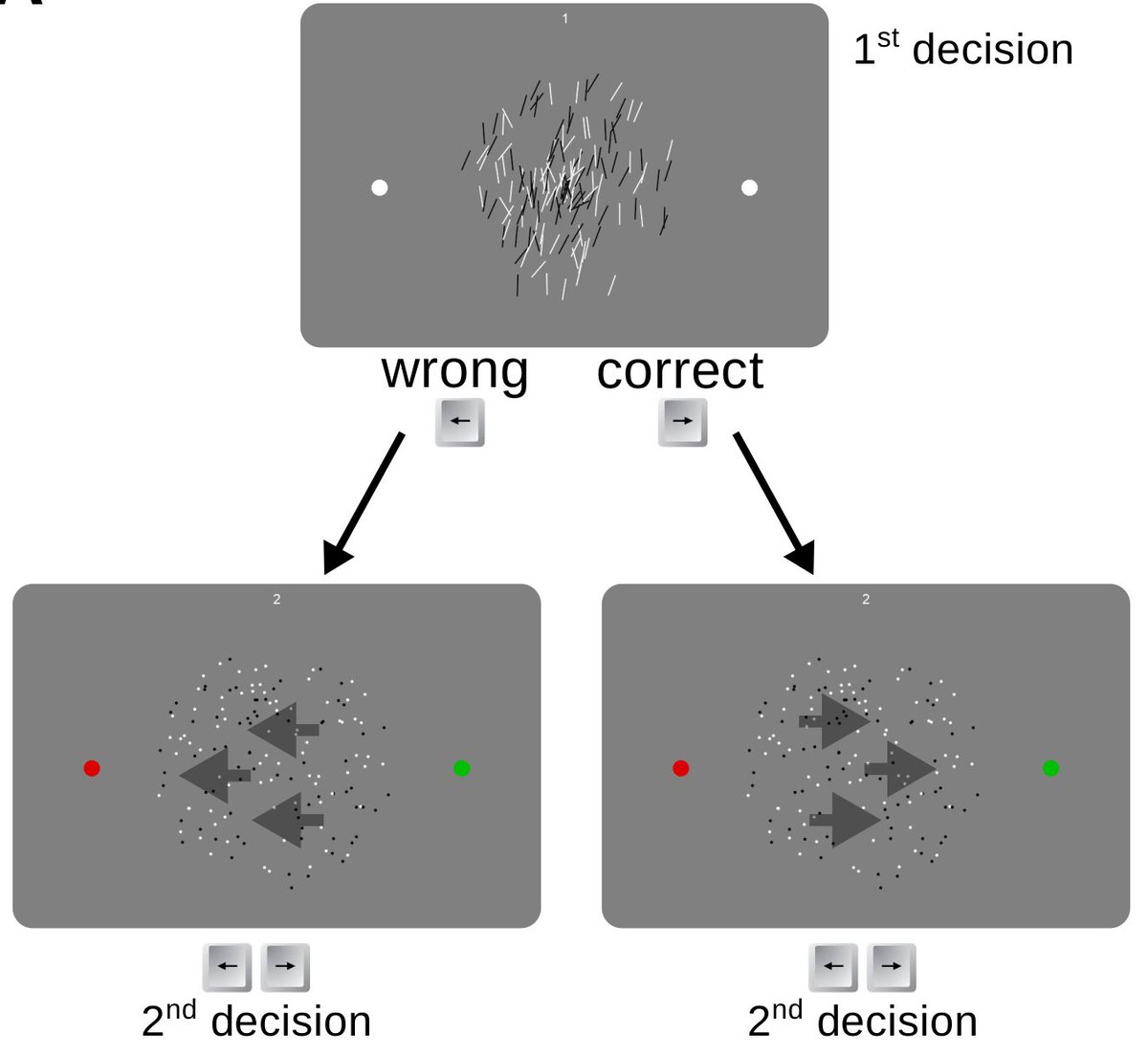

Our protocol mimics these situations with a sequence of 2 perceptual decisions, in which confidence in decision 1 gives the prior probability for the correct choice in decision 2.

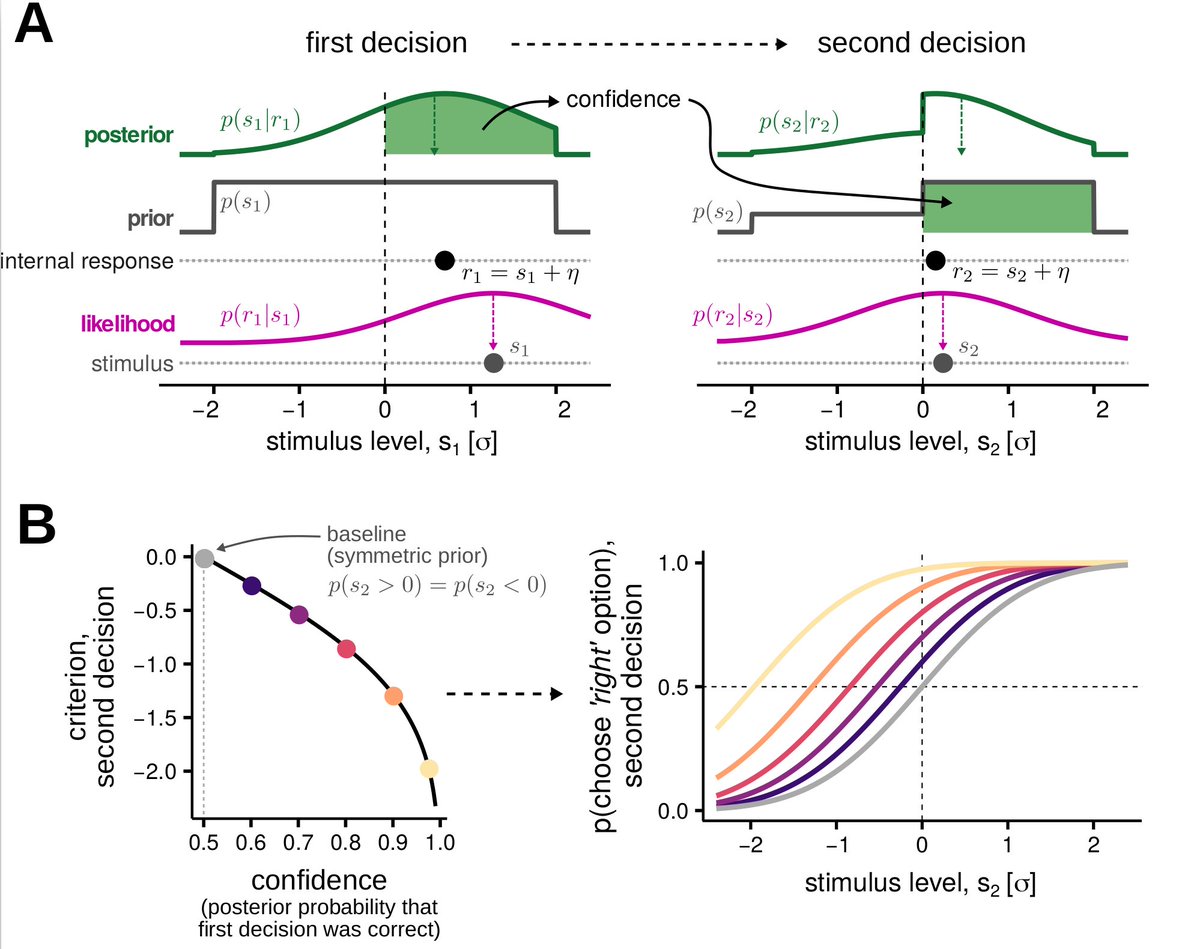

An ideal Bayesian observer facing this task would estimate the posterior probability of being correct (the confidence), and use it as a prior for the second decision.

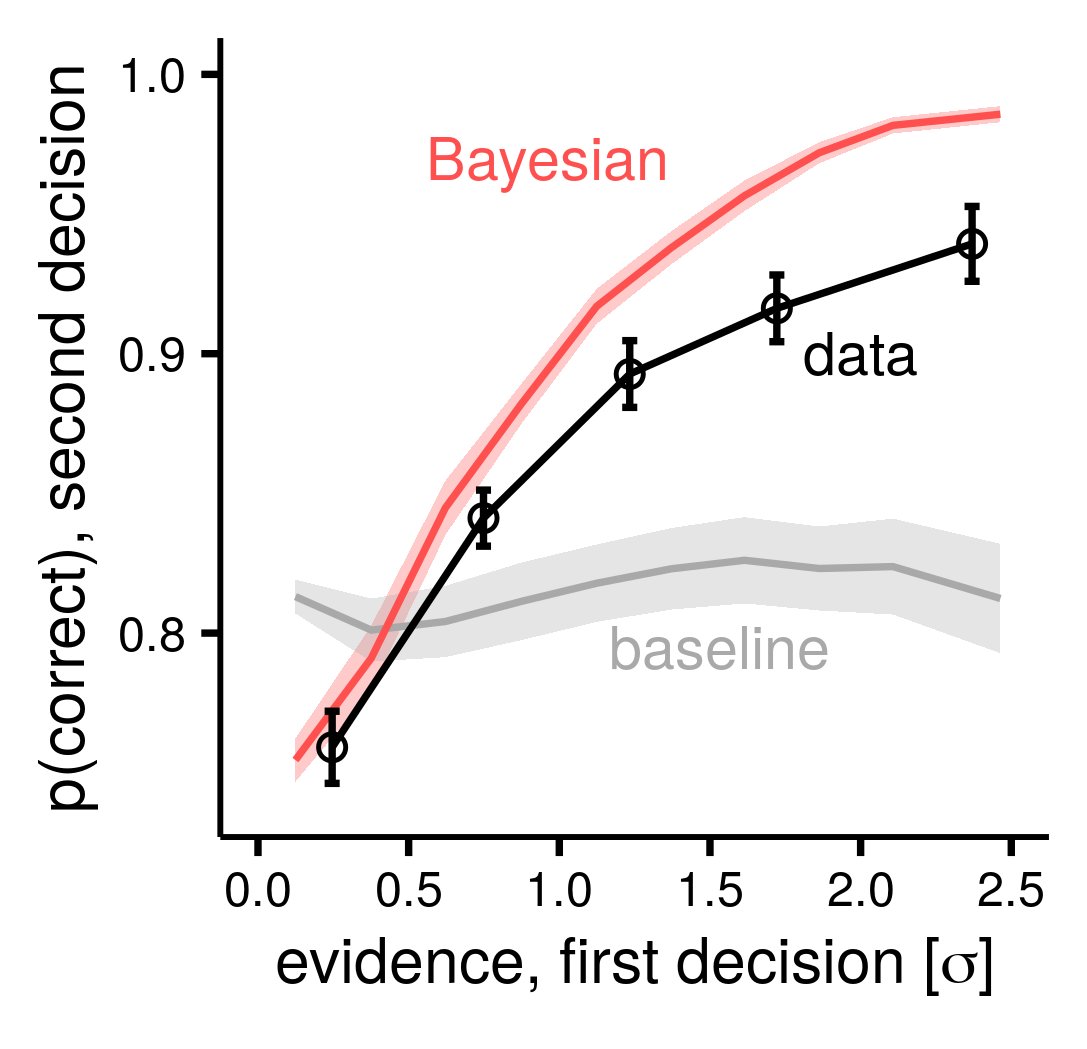
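
For the curious, here is a minimal sketch of that ideal observer. It assumes an equal-variance Gaussian signal detection model with known signal strength `mu` and noise `sigma`, which is a simplification for illustration and not necessarily the exact model fitted in the paper. All function and parameter names are hypothetical. The coding assumption is that `+1` in decision 2 is the option that is correct whenever decision 1 was correct.

```python
import math

def confidence_log_odds(x1, mu, sigma):
    """Log posterior odds that the choice sign(x1) is correct in decision 1.

    Assumes the stimulus is +mu or -mu with equal prior probability and
    the internal observation is x1 ~ Normal(stimulus, sigma**2).
    """
    return 2.0 * mu * abs(x1) / sigma**2

def log_odds_to_prob(log_odds):
    """Convert log odds to a probability (the confidence)."""
    return 1.0 / (1.0 + math.exp(-log_odds))

def second_choice(x2, prior_log_odds, mu, sigma):
    """Decision 2: add the prior log odds to the log likelihood ratio of x2.

    Returns +1 (the option favoured by the prior) or -1.
    """
    log_likelihood_ratio = 2.0 * mu * x2 / sigma**2
    return 1 if prior_log_odds + log_likelihood_ratio > 0 else -1

# Strong evidence in decision 1 outweighs weak contrary evidence in decision 2.
prior = confidence_log_odds(x1=2.0, mu=1.0, sigma=1.0)
choice = second_choice(x2=-0.5, prior_log_odds=prior, mu=1.0, sigma=1.0)
```

Working in log odds keeps the prior and the new evidence additive, and avoids dividing by zero when confidence is very close to 1.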

We find that people can use confidence to improve performance, but they fall short of the Bayesian benchmark. Here, replotted from the paper, is p(correct) in decision 2 as a function of how easy decision 1 was.

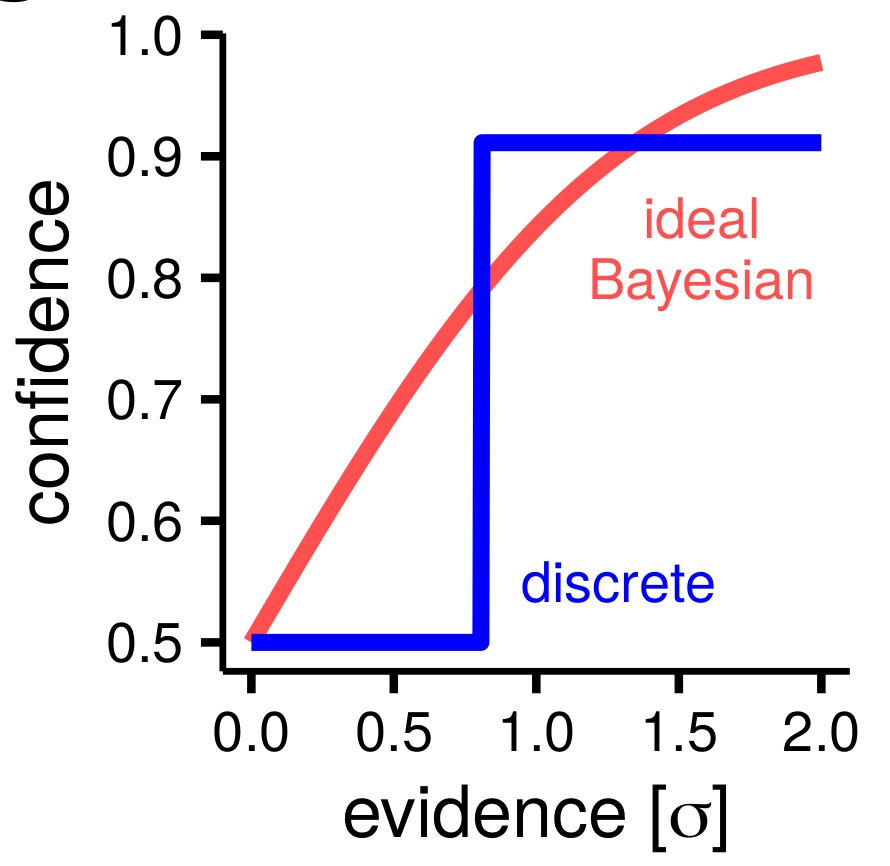

Surprisingly, the best description of observed behaviour was provided by a simple model in which confidence was discretized into two levels (uncertain vs confident), as if obtained by comparing a point estimate to a single confidence criterion.
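
A rough sketch of what such a two-level observer could look like, again under assumed Gaussian-style internal evidence. The names `criterion`, `bias_confident`, and `bias_uncertain` are hypothetical parameters chosen for illustration and are not taken from the paper.

```python
def discrete_confidence(x1, criterion):
    """Compare the evidence magnitude |x1| to a single confidence criterion."""
    return "confident" if abs(x1) > criterion else "uncertain"

def second_choice_discrete(x2, x1, criterion, bias_confident, bias_uncertain):
    """Decision 2 with a bias that takes only two values.

    The bias depends on the discrete confidence level from decision 1,
    not on the exact value of x1. Returns +1 (the option that is correct
    if decision 1 was correct) or -1.
    """
    level = discrete_confidence(x1, criterion)
    bias = bias_confident if level == "confident" else bias_uncertain
    return 1 if x2 + bias > 0 else -1

# Same weak contrary evidence in decision 2, different confidence in decision 1.
after_confident = second_choice_discrete(-0.3, 1.5, 1.0, 0.5, 0.0)
after_uncertain = second_choice_discrete(-0.3, 0.2, 1.0, 0.5, 0.0)
```

Unlike a continuous Bayesian prior, any two values of `x1` on the same side of the criterion lead to exactly the same bias, which is where the information is lost.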

This points to information loss in how confidence is formed from decision variables, akin to what is proposed by second-order models of metacognition (@smfleming).

Also, behind-the-paper blog post here: https://go.nature.com/32NvwZD

I should also mention that this project was completed only thanks to the indispensable help of @TesDekker and @GeorgiaMNeuro!

Full text link: https://rdcu.be/b7vG8
